Reranking

A second-stage retrieval technique that re-scores and reorders an initial set of retrieved documents using a more computationally expensive cross-encoder model to surface the most relevant results.

Why it matters

Adding a reranker to a RAG pipeline is commonly reported to improve retrieval precision by 15-40% for roughly 80-150ms of extra latency, making it one of the highest-impact optimisations available.

Why Initial Retrieval Isn't Enough

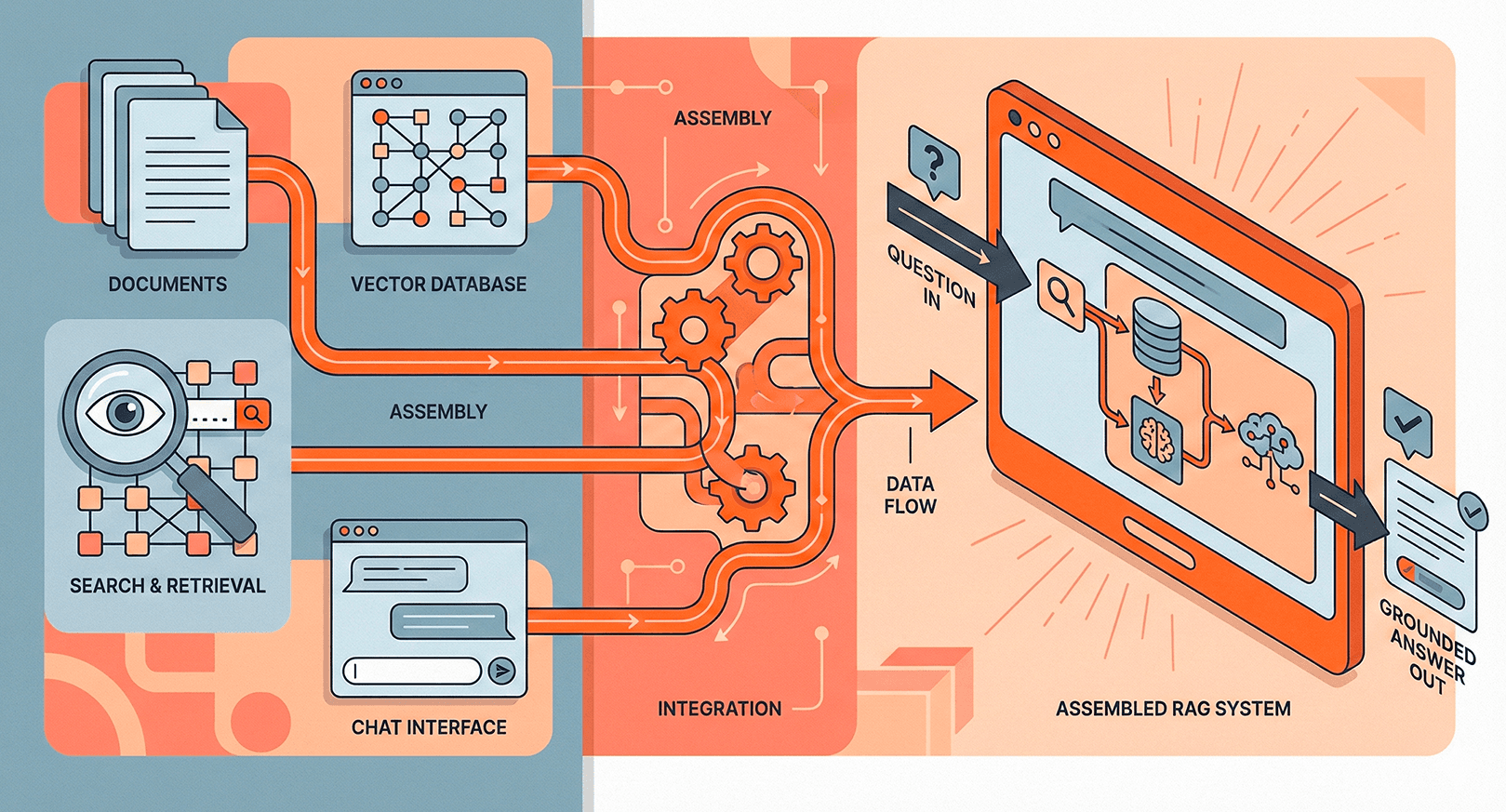

First-stage semantic retrieval uses bi-encoder models that embed queries and documents independently. This is fast, because every document embedding can be pre-computed, but lossy: each piece of text gets compressed into a single vector, and subtle relevance signals get lost in the compression. A document might be highly relevant for a specific reason that the embedding doesn't fully capture. Keyword-based retrieval such as BM25 involves no embeddings at all, but it is approximate in a similar way, scoring documents on shared terms rather than on meaning.

The result is that your top 10 results from initial retrieval often include 3-4 passages that aren't actually the best matches. They scored well on approximate similarity but would fail a careful relevance check. In a RAG pipeline, these marginal results dilute the context window and can lead the LLM toward less accurate answers.
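To make the "embed independently, compare vectors" idea concrete, here is a minimal sketch of bi-encoder-style retrieval. It assumes a hypothetical `toy_encode` function that hashes words into a small fixed-size vector; it is not a real embedding model, only a stand-in with the same shape of behaviour (one text in, one vector out). `BiEncoderIndex` and `cosine` are likewise illustrative names.

```python
import math
import zlib

DIM = 64  # hypothetical embedding size, far smaller than a real model's


def toy_encode(text: str) -> list[float]:
    """Hypothetical stand-in for a bi-encoder (not a real model).

    Each lower-cased token is hashed into one of DIM buckets and the counts
    are L2-normalised, so any text becomes one fixed-size unit vector.
    """
    vec = [0.0] * DIM
    for token in text.lower().split():
        # crc32 is deterministic across runs, unlike Python's built-in hash() for str
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def cosine(a: list[float], b: list[float]) -> float:
    # Both vectors are unit-length, so the dot product equals cosine similarity.
    return sum(x * y for x, y in zip(a, b))


class BiEncoderIndex:
    def __init__(self, docs: list[str]) -> None:
        self.docs = docs
        # Offline: each document is embedded once, without ever seeing a query.
        self.doc_vecs = [toy_encode(d) for d in docs]

    def search(self, query: str, k: int) -> list[tuple[int, float]]:
        # Online: only the query is encoded; documents are compared via cached vectors.
        q = toy_encode(query)
        scored = [(i, cosine(q, v)) for i, v in enumerate(self.doc_vecs)]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]
```

With this toy encoder, `toy_encode("dog bites man")` and `toy_encode("man bites dog")` produce identical vectors, so the index cannot tell the two documents apart. That exaggerates the loss (real bi-encoders preserve far more, including some word order), but the constraint is the same: whatever the single vector fails to encode is invisible at search time.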

Cross-Encoders vs Bi-Encoders

Rerankers use cross-encoder models that process the query and document together as a single input, allowing full attention between every token in both texts. This means the model can detect nuanced relationships — negation, conditional relevance, partial matches — that bi-encoders miss.

- Bi-encoder (first stage) — Encodes query and document separately. Fast, scalable, but compressed representations lose detail.

- Cross-encoder (reranker) — Encodes query+document as a pair. Much more accurate but too slow to run against an entire corpus. Outputs a relevance score rather than an embedding.
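The difference between the two model types shows up directly in their call signatures. The sketch below writes both as `typing.Protocol` interfaces (`BiEncoderLike`, `CrossEncoderLike`; the names are illustrative) and adds a hypothetical `ToyCrossEncoder` whose scoring rule (word overlap plus in-order word-pair overlap) is a crude stand-in, not how a transformer cross-encoder actually computes relevance.

```python
from typing import Protocol


class BiEncoderLike(Protocol):
    # One text in, one vector out; document vectors can be computed ahead of time.
    def encode(self, text: str) -> list[float]: ...


class CrossEncoderLike(Protocol):
    # A (query, document) pair in, one relevance score out; nothing to pre-compute.
    def score(self, query: str, document: str) -> float: ...


def _bigrams(tokens: list[str]) -> set[tuple[str, str]]:
    # Adjacent word pairs, used as a crude word-order signal.
    return set(zip(tokens, tokens[1:]))


class ToyCrossEncoder:
    """Hypothetical scorer that mimics the cross-encoder interface.

    A real cross-encoder runs a transformer over the query and document as one
    input; this toy only shows the shape of the call: both texts go in together
    and a single float comes out.
    """

    def score(self, query: str, document: str) -> float:
        q = query.lower().split()
        d = document.lower().split()
        if not q:
            return 0.0
        d_set = set(d)
        # Fraction of query words that occur anywhere in the document.
        unigram = sum(1 for t in q if t in d_set) / len(q)
        # Fraction of adjacent query-word pairs that occur in the same order.
        q_bi = _bigrams(q)
        bigram = len(q_bi & _bigrams(d)) / len(q_bi) if q_bi else 0.0
        return unigram + bigram
```

For example, `ToyCrossEncoder().score("dog bites man", "man bites dog")` returns `1.0`, while scoring the query against an identical document returns `2.0`. The important point is the signature: a real cross-encoder has to run once per (query, document) pair, with nothing cached ahead of time.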

This is why reranking is a second stage: you use fast bi-encoder retrieval to narrow millions of documents to ~100 candidates, then use the expensive cross-encoder to accurately rank just those candidates.
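The cost argument is simple arithmetic over model forward passes per query. This sketch counts them for each strategy. `model_passes_per_query` and `estimated_seconds` are illustrative helpers. The per-pass latency is a purely hypothetical input, and the sketch treats bi-encoder and cross-encoder passes as equally expensive, which real models are not.

```python
def model_passes_per_query(corpus_size: int, candidates: int) -> dict[str, int]:
    """Count model forward passes needed at query time for each strategy."""
    return {
        # Documents were embedded offline; only the query needs encoding.
        # (The nearest-neighbour search itself is not a model pass.)
        "bi_encoder_only": 1,
        # A cross-encoder must score every (query, document) pair.
        "cross_encoder_full_corpus": corpus_size,
        # Encode the query, then cross-encode only the shortlisted candidates.
        "retrieve_then_rerank": 1 + candidates,
    }


def estimated_seconds(passes: int, ms_per_pass: float) -> float:
    # Naive serial estimate; batching and parallel hardware lower the real figure.
    return passes * ms_per_pass / 1000.0
```

With a corpus of 1,000,000 documents, 100 candidates and an assumed 1 ms per pass, cross-encoding the whole corpus would take about 1,000 seconds per query, while retrieve-then-rerank needs 101 passes, or about 0.1 seconds.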

Practical Implementation

The standard pattern is retrieve-then-rerank: pull the top 50-100 candidates from your vector database, pass all of them through a reranker, and keep the top 5-10 for your LLM context. Popular reranking options include Cohere Rerank, Jina Reranker, and open-source models like BGE-reranker-v2.
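A minimal sketch of the retrieve-then-rerank pattern follows. It treats both stages as pluggable callables. The `Retriever` and `PairScorer` signatures are assumptions chosen for illustration, not the API of any particular vector database or reranking service, and the toy retriever and scorer in the demo are placeholders.

```python
from typing import Callable, Sequence

# Hypothetical signatures: plug in your own vector-database query and reranker.
Retriever = Callable[[str, int], Sequence[str]]  # (query, n) -> candidate texts
PairScorer = Callable[[str, Sequence[str]], Sequence[float]]  # (query, texts) -> scores


def retrieve_then_rerank(
    query: str,
    retrieve: Retriever,
    score_pairs: PairScorer,
    n_candidates: int = 100,
    top_k: int = 5,
) -> list[tuple[str, float]]:
    # Stage 1: cheap, approximate recall from the full corpus.
    candidates = list(retrieve(query, n_candidates))
    if not candidates:
        return []
    # Stage 2: score every candidate against the query in one batch.
    scores = list(score_pairs(query, candidates))
    if len(scores) != len(candidates):
        raise ValueError("score_pairs must return exactly one score per candidate")
    # Highest score first; keep only what will go into the LLM context.
    ranked = sorted(zip(candidates, scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:top_k]


if __name__ == "__main__":
    docs = [
        "rerankers use cross-encoders",
        "bi-encoders embed text",
        "cross-encoders score query document pairs",
    ]

    def toy_retrieve(query: str, n: int) -> list[str]:
        # Stand-in for a vector search: just returns the first n documents.
        return docs[:n]

    def toy_score(query: str, texts: Sequence[str]) -> list[float]:
        # Stand-in for a reranker: counts words shared with the query.
        q = set(query.lower().split())
        return [float(len(q & set(t.lower().split()))) for t in texts]

    print(retrieve_then_rerank("how do cross-encoders score pairs",
                               toy_retrieve, toy_score, n_candidates=3, top_k=2))
```

Scoring candidates as a batch mirrors how reranking models are usually called. For example, assuming the sentence-transformers package is installed, `score_pairs` could wrap `CrossEncoder("BAAI/bge-reranker-v2-m3").predict([(query, t) for t in texts])`. Hosted rerankers such as Cohere Rerank or Jina Reranker would be wrapped the same way through their own clients.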

Latency overhead is modest, typically 80-150ms for reranking 100 passages, though the exact figure depends on the model, passage length, batch size and hardware (or the network round-trip, for hosted APIs). The accuracy improvement is substantial: teams commonly report 15-40% gains in retrieval precision after adding a reranker, making it one of the highest-leverage improvements you can make to a RAG pipeline without changing your chunking strategy or embedding model.
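To check whether a reranker delivers gains like these on your own data, compare precision@k and latency with and without it. The helpers below (`precision_at_k`, `relative_gain`, `timed_ms`) are an illustrative sketch, and the document IDs in the example are made up, not benchmark results. Note that a "gain" can be quoted as a relative improvement or in percentage points. The sketch computes the relative figure.

```python
import time
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def precision_at_k(ranked_ids: Sequence[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k results that are labelled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant) / k


def relative_gain(before: float, after: float) -> float:
    """Relative improvement, e.g. 0.25 means 25% better than the baseline."""
    if before == 0:
        raise ValueError("relative gain is undefined for a zero baseline")
    return (after - before) / before


def timed_ms(fn: Callable[[], T]) -> tuple[T, float]:
    """Run fn once and return its result plus wall-clock time in milliseconds."""
    start = time.perf_counter()
    result = fn()
    return result, (time.perf_counter() - start) * 1000.0
```

Suppose `{"d1", "d2", "d3"}` are the relevant IDs, the first stage returns `["d1", "d9", "d2", "d8", "d7"]` and the reranker reorders a larger candidate pool into `["d1", "d2", "d3", "d9", "d8"]`. Precision@5 then rises from 0.4 to 0.6. That is a 50% relative gain, or 20 percentage points. Wrapping the reranking call in `timed_ms` over many queries gives the corresponding latency overhead.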

Related terms