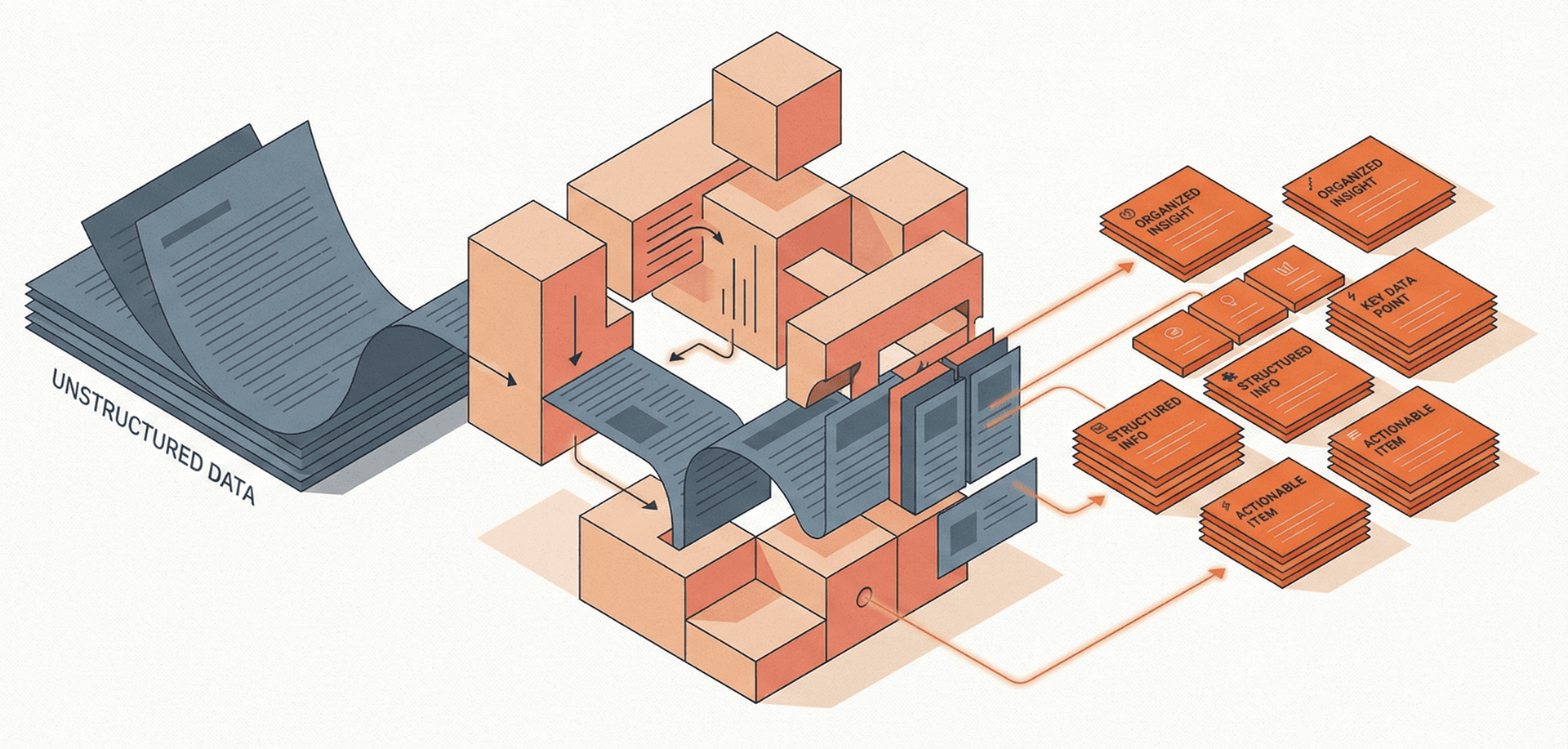

Chunking

The process of splitting documents into smaller, semantically meaningful segments optimised for embedding and retrieval in AI systems like RAG pipelines.

Why it matters

Chunking configuration has as much impact on RAG quality as the choice of embedding model — getting it wrong means retrieving irrelevant context and degrading AI responses.

Why Chunking Matters

Embedding models have context windows — typically 512 to 8,192 tokens — and they work best when the input is a focused passage about a single topic. Feed in an entire 30-page document and the resulting embedding becomes a blurred average of everything, useful for matching nothing in particular. Chunking solves this by breaking documents into pieces small enough to embed accurately and specific enough to retrieve precisely.

The stakes are real: chunk too large and you dilute the embedding signal, retrieving vaguely relevant blocks full of noise. Chunk too small and you lose the surrounding context that makes a passage meaningful, leaving the LLM with sentence fragments it can't reason over.

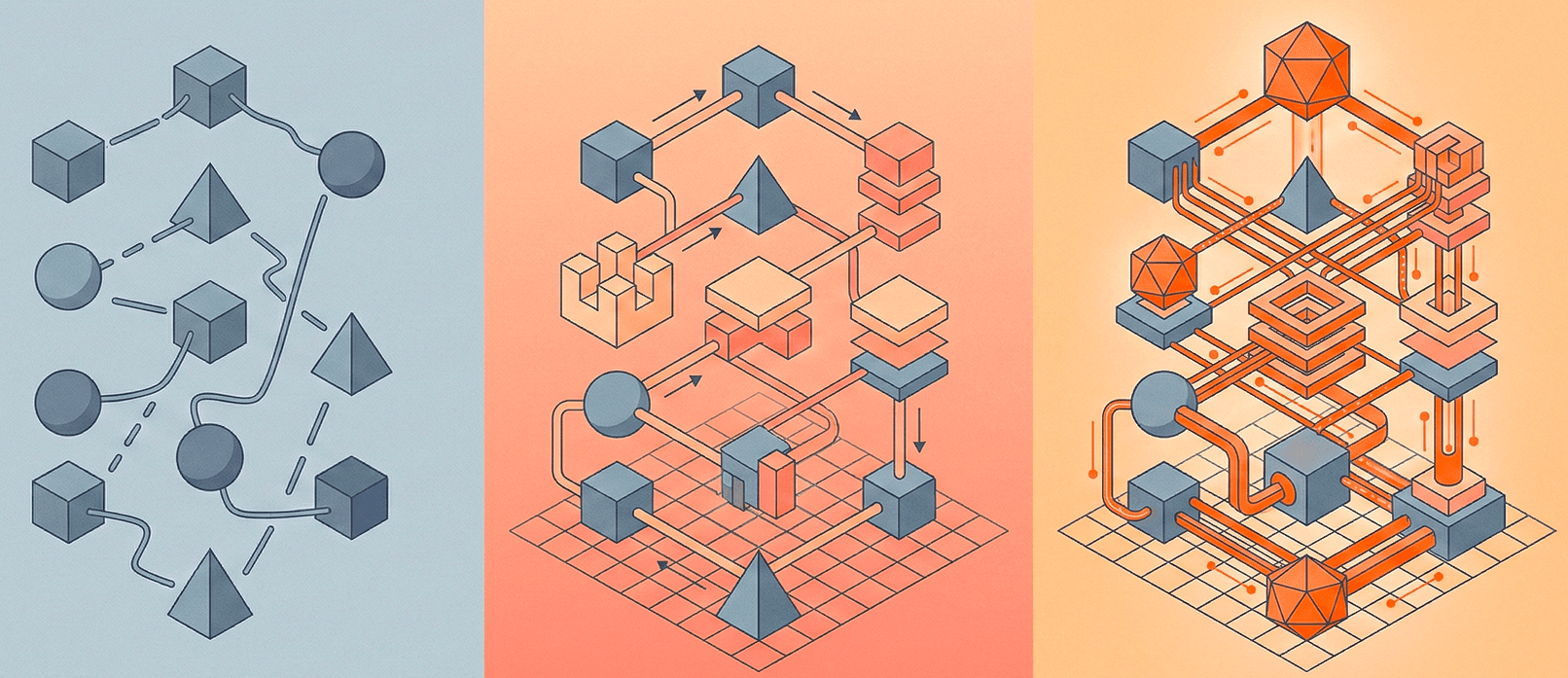

Key Strategies

- Fixed-size chunking — Split every N tokens with an overlap window. Simple and predictable, but ignores document structure entirely. A paragraph about two different topics gets treated as one unit.

- Recursive character splitting — The LangChain default. Splits on paragraph breaks first, then sentences, then words. Respects natural boundaries better than fixed-size.

- Sentence-level chunking — Groups complete sentences up to a token limit. Preserves grammatical coherence but may split related ideas across chunks.

- Semantic chunking — Uses embedding similarity between consecutive sentences to detect topic shifts and split at natural breakpoints. Highest quality but most computationally expensive.

Impact on RAG Quality

Chunk size and overlap are the two most impactful parameters. A common production starting point is 400-600 tokens with 50-100 token overlap. But the right setting depends on your content: technical documentation with dense terminology benefits from smaller chunks, while narrative content often needs larger ones to preserve reasoning chains.

Testing matters more than theory here. The best practice is to build an evaluation set of queries and expected source passages, then measure retrieval recall across different chunk configurations. Small changes — like adding a title prefix to each chunk or including metadata — can shift accuracy by 10-20%.

Related terms