Compound AI Systems

AI architectures that combine multiple models, retrievers, tools, and control logic to tackle tasks that no single model could reliably handle on its own.

Why it matters

The shift from "which model should I use?" to "how should I design the system?" is the most important mental-model change for teams building production AI: scaling a model alone often yields a worse return on investment than engineering a better system around it.

Why single models aren't enough

Even the most capable LLMs have hard limitations: they hallucinate facts, their knowledge freezes at a training cutoff, and on their own they can neither access private data nor take actions in the real world. Scaling model size helps, but with diminishing returns. At some point, throwing more parameters at the problem costs more than engineering a better system around a capable-enough model.

This is the core insight behind compound AI systems: the system is the product, not the model. The model is one component among many.

What makes a system "compound"

A compound AI system has multiple interacting components that each handle a different part of the task:

- Models — one or more LLMs, potentially of different sizes or specialisations. A fast model handles simple routing while a more capable model tackles complex reasoning.

- Retrievers — vector search, keyword search, or knowledge graph lookups that feed relevant context into the model at inference time, grounding its outputs in real data.

- Tools — code executors, calculators, API clients, database connectors. Anything the model cannot do through text generation alone.

- Control flow — the logic that ties everything together: conditional branching, loops, retry policies, human-in-the-loop gates, and output validators.
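To make the four component types concrete, here is a minimal Python sketch of how they might be wired together. Everything in it is hypothetical: `CompoundSystem`, the toy router, retriever, reasoner and calculator are illustrative stand-ins for real LLM calls and services, not any particular framework's API. The control flow shows three of the mechanisms listed above: branching on a router's decision, a retry loop gated by an output validator, and escalation to a human when retries run out.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical component signatures; all names are illustrative, not a real library API.
Model = Callable[[str], str]            # prompt -> completion
Retriever = Callable[[str], list[str]]  # query -> context passages
Tool = Callable[[str], str]             # raw request -> tool result


@dataclass
class CompoundSystem:
    router: Model                     # small, fast model: picks a tool name or "reason"
    reasoner: Model                   # larger model for open-ended reasoning
    retriever: Retriever              # grounds the reasoner in external data
    tools: dict[str, Tool]            # capabilities beyond text generation
    validator: Callable[[str], bool]  # output check before anything is returned
    max_retries: int = 2

    def run(self, query: str) -> str:
        route = self.router(query).strip()
        if route in self.tools:                       # branch: delegate to a tool
            return self.tools[route](query)
        context = "\n".join(self.retriever(query))    # retrieval step
        prompt = f"Context:\n{context}\n\nQuestion: {query}"
        for _ in range(1 + self.max_retries):         # retry policy
            answer = self.reasoner(prompt)
            if self.validator(answer):                # output validator
                return answer
        return "ESCALATE_TO_HUMAN"                    # human-in-the-loop gate


# --- Toy stand-ins for each component (all hypothetical) ---
DOCS = ["Paris is the capital of France.", "The Rhine flows through Germany."]


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def toy_router(query: str) -> str:
    # A real router would be a small model; here, any digit means "use the calculator".
    return "calculator" if any(ch.isdigit() for ch in query) else "reason"


def toy_retriever(query: str) -> list[str]:
    # Return the single document with the largest word overlap.
    return [max(DOCS, key=lambda d: len(words(query) & words(d)))]


def toy_reasoner(prompt: str) -> str:
    # Pretend "reasoning": echo the first context line.
    return prompt.splitlines()[1]


def calculator(query: str) -> str:
    # Sums the integers in the request, e.g. "add 2 and 3" -> "5".
    return str(sum(int(tok) for tok in re.findall(r"\d+", query)))


system = CompoundSystem(
    router=toy_router,
    reasoner=toy_reasoner,
    retriever=toy_retriever,
    tools={"calculator": calculator},
    validator=lambda answer: bool(answer.strip()),
)

print(system.run("What is the capital of France?"))  # Paris is the capital of France.
print(system.run("add 2 and 3"))                     # 5
```

Because each component is just a callable, swapping the toy reasoner for a real model client, or the keyword retriever for a vector index, leaves the control flow untouched. That separation is one practical benefit of treating the system, rather than the model, as the unit of design.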

Real-world examples

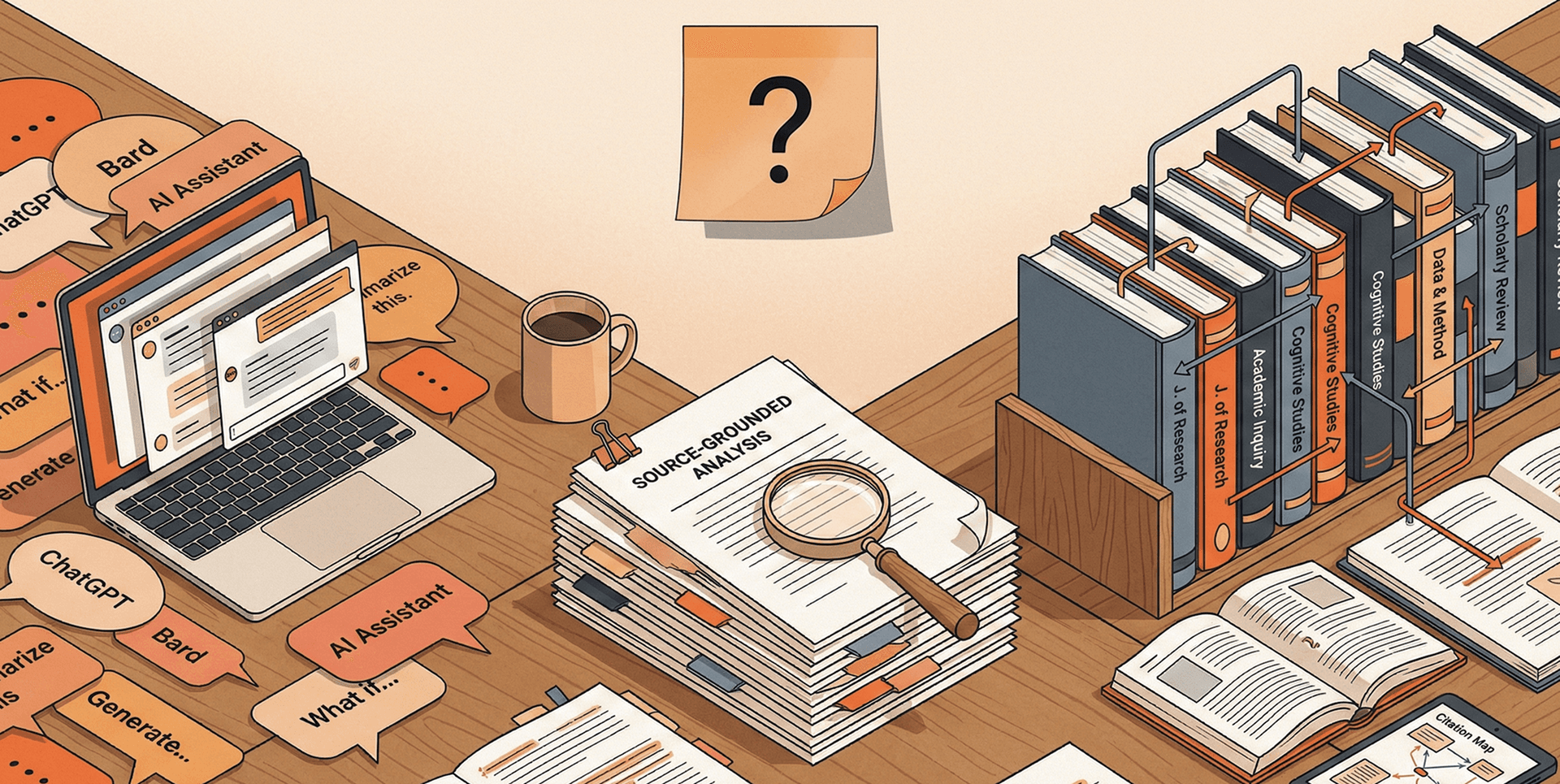

Retrieval-augmented generation (RAG) systems are the most widespread compound AI pattern: a retriever fetches relevant documents, a model synthesises an answer grounded in those documents, and a validator checks the answer for hallucinated claims. The design is simple, yet already far more reliable than a model answering from memory alone.
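The retrieve, synthesise and validate steps can be sketched in a few dozen lines of Python. This assumes a toy keyword retriever and a crude lexical-overlap check standing in for a real hallucination detector (a real system might instead use an entailment model or an LLM judge). `generate` is a hypothetical placeholder for an LLM call, so the example injects two fake models: one that copies a retrieved passage and one that invents details. The validator accepts the first answer and rejects the second.

```python
import re
from typing import Callable, Optional


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens: a crude stand-in for real tokenisation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[str], k: int = 2) -> list[str]:
    """Toy keyword retriever: top-k passages by token overlap with the query."""
    q = tokens(query)
    ranked = sorted(corpus, key=lambda p: len(q & tokens(p)), reverse=True)
    return [p for p in ranked[:k] if q & tokens(p)]


def is_grounded(answer: str, passages: list[str], min_support: float = 0.8) -> bool:
    """Toy validator: share of answer tokens that also occur in the passages."""
    ans = tokens(answer)
    support = set().union(*(tokens(p) for p in passages))
    return bool(ans) and len(ans & support) / len(ans) >= min_support


def rag_answer(query: str, corpus: list[str], generate: Callable[[str], str]) -> Optional[str]:
    passages = retrieve(query, corpus)                        # 1. retrieve
    prompt = "Answer using only these passages:\n" + "\n".join(passages) + f"\n\nQ: {query}"
    answer = generate(prompt)                                 # 2. synthesise
    return answer if is_grounded(answer, passages) else None  # 3. validate


CORPUS = [
    "The refund window is 30 days from delivery.",
    "Support is available Monday to Friday.",
    "Gift cards cannot be refunded.",
]


def copying_model(prompt: str) -> str:
    # Fake LLM that repeats the first retrieved passage.
    return prompt.splitlines()[1]


def hallucinating_model(prompt: str) -> str:
    # Fake LLM that ignores the context and invents details.
    return "The refund window is 90 days and includes gift cards."


question = "How many days is the refund window?"
print(rag_answer(question, CORPUS, copying_model))        # The refund window is 30 days from delivery.
print(rag_answer(question, CORPUS, hallucinating_model))  # None
```

Returning `None` instead of the unverified answer is one design choice; a system could equally retry generation or fall back to an explicit "I don't know".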

More sophisticated examples include agent pipelines where a planning model decomposes a task, specialist models handle subtasks, and an evaluator model checks the final output. DoorDash uses a compound system for fraud investigation that combines transaction analysis, pattern matching, and LLM-based reasoning. LinkedIn's support system routes queries through classification, retrieval, and generation stages with human escalation at confidence thresholds.
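The confidence-threshold escalation described for support routing can be illustrated with a small Python sketch. This is not LinkedIn's implementation: the keyword classifier, the one-article-per-intent knowledge base, the `generate` stub and the 0.75 threshold are all hypothetical. The sketch runs the classification, retrieval and generation stages in order and hands the query to a human whenever the classifier's confidence falls below the threshold.

```python
import re
from dataclasses import dataclass

# Hypothetical intent keywords; a production system would use a trained classifier or an LLM.
INTENT_KEYWORDS = {
    "billing": {"invoice", "charge", "refund"},
    "account": {"password", "login", "locked"},
}
# Hypothetical knowledge base: one article per intent, standing in for a retriever.
KB = {
    "billing": "Refunds are issued to the original payment method.",
    "account": "Use the 'Forgot password' link to reset your login.",
}


@dataclass
class Classification:
    label: str
    confidence: float  # share of keyword hits that went to the winning label


def classify(query: str) -> Classification:
    words = set(re.findall(r"[a-z]+", query.lower()))
    hits = {label: len(words & kws) for label, kws in INTENT_KEYWORDS.items()}
    total = sum(hits.values())
    if total == 0:
        return Classification("unknown", 0.0)
    label = max(hits, key=hits.get)  # ties resolve to the first label listed
    return Classification(label, hits[label] / total)


def generate(label: str, passage: str, query: str) -> str:
    # Stand-in for the generation model; a real one would condition on `query` too.
    return f"[{label}] {passage}"


def handle(query: str, threshold: float = 0.75) -> tuple[str, str]:
    """Classification -> retrieval -> generation, escalating below `threshold`."""
    c = classify(query)
    if c.confidence < threshold or c.label not in KB:
        return ("human", f"escalated: {c.label} at confidence {c.confidence:.2f}")
    passage = KB[c.label]  # retrieval stage
    return ("bot", generate(c.label, passage, query))


print(handle("I need a refund for a double charge on my invoice"))
# ('bot', '[billing] Refunds are issued to the original payment method.')
print(handle("My login failed after a refund"))
# ('human', 'escalated: billing at confidence 0.50')
```

Raising `threshold` trades automation rate for safety: more queries reach a human, but fewer low-confidence answers reach customers.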

The common thread: each component does what it is best at, and the system is designed so that the weaknesses of one component are covered by the strengths of another.

Related terms