
I Gave the Same 15 Sources to Three Different AI Models. They Found Completely Different Things

9 min read · Mar 8, 2026

AI Strategy

Rosh Jayawardena, Data & AI Executive

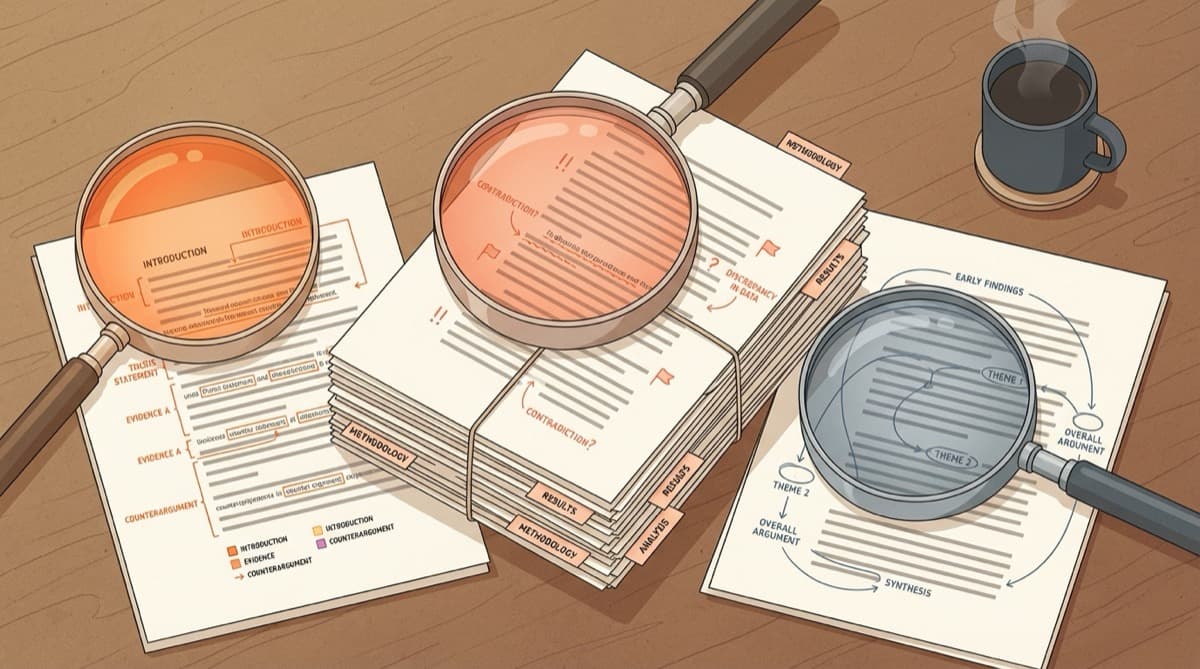

Different AI models find different things in the same documents. Here's what the research actually shows, and why model choice is a research methodology decision, not a feature checkbox.

I uploaded 15 research papers into three different AI models last month. Same PDFs. Same question: "What are the key tensions between these sources?"

Claude flagged a methodological disagreement between two papers that I'd missed in my own reading. GPT-4 produced a structured taxonomy of positions, cleanly categorised by theme. Gemini synthesised across the full corpus and pulled out a narrative thread connecting arguments I'd been thinking about separately.

Three models. Three different analyses. None of them were wrong. But each one missed things the others caught.

That got me thinking: if your AI research tool only offers one model, which perspective are you stuck with? And what are you missing?

The one-model default

Most AI research tools lock you to a single model, and most of us never think twice about it.

NotebookLM uses Gemini. ChatGPT uses GPT. When you upload your sources and ask a question, every answer you get is filtered through one model's training data, one set of reasoning patterns, one set of blind spots.

You're getting a single perspective on your research. You just don't realise it.

There's a reasonable counterargument here. Fly.io recently published a piece arguing that the future isn't model agnostic, that builders should go all-in on one model and optimise for it. For developer tools, there's a case to be made. When you're building a product, deep integration with one model can be a genuine advantage.

But research isn't product development. When you're trying to understand a complex topic from multiple angles, locking yourself to one analytical lens is a real limitation. It's like writing a literature review but only searching one database. You might find good stuff. You'll also miss good stuff, and you won't know what.

This isn't a fringe concern either. A Parallels survey of 540 IT professionals found that 94% of organisations are concerned about vendor lock-in, with nearly half saying they're "very concerned." (Worth noting: Parallels sells multi-cloud solutions, so they have skin in this game. But the scale of concern tracks with what I'm hearing anecdotally.)

But does this actually matter for research quality? Do different AI models find different things in the same documents?

The evidence: different models, different findings

From what I've seen: yes. And the gap is bigger than I expected.

A peer-reviewed study gave 83 cardiology questions to five AI models. Claude scored highest overall at 78.31%. But the interesting part was this: GPT-4 beat Claude on diagnostic investigations, scoring 87.5%. And Gemini led on cardiovascular pharmacology, also at 87.5%. Claude dominated on heart failure questions (100%) and arrhythmias (90.9%).

The differences weren't random. A Kruskal-Wallis test confirmed they were statistically significant. The "best model" changed depending on what subdomain you were asking about, within the same field of cardiology.
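If the Kruskal-Wallis test is unfamiliar: it's a rank-based alternative to one-way ANOVA. It pools every score, ranks them, and asks whether the groups' average ranks differ by more than chance would explain. Here's a minimal plain-Python sketch of the H statistic with the standard tie correction. The inputs are toy numbers, not the cardiology study's data. In practice you'd reach for `scipy.stats.kruskal`, which also gives you the p-value.

```python
from collections import Counter


def rank_with_ties(values):
    """Assign 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Advance j to the end of the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def kruskal_wallis_h(*groups):
    """Kruskal-Wallis H statistic, corrected for ties."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = rank_with_ties(pooled)

    # Sum of (group rank total squared / group size) across groups.
    between = 0.0
    start = 0
    for g in groups:
        group_ranks = ranks[start:start + len(g)]
        between += sum(group_ranks) ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * between - 3 * (n + 1)

    # Tie correction: divide by 1 - sum(t^3 - t) / (n^3 - n).
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    if tie_term == n ** 3 - n:
        raise ValueError("all values are identical; H is undefined")
    return h / (1 - tie_term / (n ** 3 - n))
```

For three groups with no overlap, `kruskal_wallis_h([1, 2, 3], [4, 5, 6], [7, 8, 9])` comes out at about 7.2. The further apart the groups' ranks sit, the larger H gets.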

A separate study published in Nature reinforced the pattern. Same medical exam questions, three models. Claude led overall at 80% accuracy, GPT-4 at 64%, Gemini at 63%. Sounds like Claude wins, right?

Not quite. In prosthetic dentistry specifically, Gemini outperformed Claude, 51% to 45%. And when the same questions were asked in Polish, Gemini's accuracy dropped by 31%, while Claude stayed consistent across both languages.

The best model flips. It depends on the domain, the task, and even the language.
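One way to make that concrete is to treat each domain as its own leaderboard and take the top score in each. The sketch below uses only the accuracy figures quoted above from the exam study. GPT-4's prosthetic dentistry score isn't given here, so it's left out rather than guessed.

```python
# Accuracy (%) figures quoted above from the exam study. GPT-4's prosthetic
# dentistry score isn't given, so it's omitted rather than guessed.
RESULTS = {
    "overall": {"Claude": 80, "GPT-4": 64, "Gemini": 63},
    "prosthetic dentistry": {"Gemini": 51, "Claude": 45},
}


def best_model(scores: dict) -> str:
    """Return the highest-scoring model in one slice (first listed wins a tie)."""
    return max(scores, key=scores.get)


def winners(results: dict) -> dict:
    """Per-slice leaderboard winners."""
    return {name: best_model(scores) for name, scores in results.items()}


print(winners(RESULTS))
```

`winners(RESULTS)` picks Claude overall and Gemini for prosthetic dentistry. A single overall ranking hides that second row entirely.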

Beyond accuracy: how models think differently

The divergence goes deeper than which model gets more answers right. Each model reasons differently, and those differences matter for research.

Claude tends toward decision-oriented analysis. It flags constraints, notes what's ambiguous, and hedges when the evidence is mixed. In a Glean evaluation of document understanding, Claude achieved 94.2% accuracy on legal clause extraction, a task where precision and nuance matter most.

GPT leans toward structured, categorical output. It's strong at breaking complex material into clean taxonomies and digestible segments. If you need to extract structured data from a pile of reports, GPT's instinct to categorise and segment works well.

Gemini handles volume. With a context window of 1 to 2 million tokens, it can process entire document collections that would exceed other models' limits. And its native multimodal processing means it handles scanned documents and images without external OCR, hitting 94% accuracy on scanned invoice extraction in a Koncile comparison.
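To put the context-window point in numbers, here's a rough back-of-envelope check of whether a document set fits in a given window. It rests on an assumption: about 4 characters per token, a common rule of thumb for English text. Real tokenizers vary by model and language, so treat the result as an estimate and use the provider's own token counter before relying on it. The `reserve_tokens` allowance and the corpus sizes are hypothetical.

```python
import math

# Assumption: roughly 4 characters per token, a common rule of thumb for
# English prose. Real tokenizers differ by model and language.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Approximate a token count from character length."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(docs, window_tokens: int, reserve_tokens: int = 8_000):
    """Estimate whether every doc fits in one context window.

    reserve_tokens is a hypothetical allowance for the question and the answer.
    Returns (fits, estimated_document_tokens).
    """
    total = sum(estimate_tokens(d) for d in docs)
    return total + reserve_tokens <= window_tokens, total


# Hypothetical corpus: 200 sources of ~30,000 characters each.
corpus = ["x" * 30_000] * 200
print(fits_in_context(corpus, window_tokens=1_000_000))  # (False, 1500000)
print(fits_in_context(corpus, window_tokens=2_000_000))  # (True, 1500000)
```

Under these assumptions, a 200-source corpus lands around 1.5 million tokens: too big for a 1-million-token window, comfortable in a 2-million-token one.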

These aren't just formatting preferences. They shape what gets pulled out of your sources and what stays buried.

The Stanford HAI AI Index adds an important nuance. In aggregate, the performance gap between the top-ranked and 10th-ranked models has narrowed from 11.9% to 5.4%. But drill into specific benchmarks and the story changes. On GPQA Diamond (graduate-level expert reasoning), scores range from 81% for GPT-5.3 to 94.3% for Gemini 3.1 Pro: a spread of more than 13 points, despite the overall convergence.

Models are getting closer on average. They remain quite different on specifics.

Model choice as research methodology

In traditional research, you wouldn't rely on a single analytical framework. You'd triangulate. You'd look at qualitative and quantitative data. You'd cross-reference sources and methods.

The same logic applies to AI-assisted research. Different models are different analytical lenses. Using only one is a methodological choice, whether you intended it or not.

The NC Bar Association made this explicit in 2025, advising legal professionals to compare outputs from multiple AI models. Not because any single model is unreliable, but because each one pulls out different aspects of the same legal question.

An MIT Sloan study found that only half of the performance gains from using a more advanced AI model come from the model itself. The other half comes from how users adapt their prompts to the model. Your model choice doesn't just shape the answer. It shapes your entire analytical approach.

And a Wharton study found something I reckon is worth sitting with: when researchers ran identical prompts 100 times on the same model, they found substantial variability in responses. Across different models, the divergence is even greater. This isn't a flaw to fix. It's a property to use deliberately.
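Here's a minimal sketch of what using that variability deliberately might look like: ask the same question several times, tally the answers, and report how often the most common answer came back. The `ask` callable is a hypothetical stand-in for whatever model client you use, and this isn't the Wharton researchers' own method. Exact-string comparison only works for short, constrained answers (a label, a yes/no, a multiple-choice letter); free-text answers would need a looser comparison.

```python
from collections import Counter
from typing import Callable


def consistency(ask: Callable[[str], str], prompt: str, runs: int = 100):
    """Run one prompt repeatedly and measure how often the modal answer recurs.

    `ask` is a hypothetical function that sends `prompt` to a model and
    returns its reply as a string. Returns (modal_answer, agreement_rate, tally).
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    # Normalise lightly so "Yes" and "yes " count as the same answer.
    tally = Counter(ask(prompt).strip().lower() for _ in range(runs))
    modal_answer, count = tally.most_common(1)[0]
    return modal_answer, count / runs, tally
```

A low agreement rate on a constrained question is a cue to ask again, or to ask another model, before trusting any single answer.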

Think about three practical scenarios:

Nuanced qualitative analysis. You're reviewing interview transcripts or policy documents where what's not said matters as much as what is. Claude's tendency to flag ambiguity and contested claims helps here.

Structured data extraction. You've got 30 reports and need to pull out consistent data points across all of them. GPT's categorical output style and structured segmentation suit this kind of task.

Massive corpus synthesis. You're working with 200+ sources and need to identify patterns across the full set. Gemini's context window lets you process what other models can't even load.

The researcher who can switch between these depending on the task has a methodological advantage over someone locked to a single model.
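The three scenarios above amount to a routing rule. Below is a minimal sketch that encodes those rules of thumb as a lookup. The task labels, the `"claude"`/`"gpt"`/`"gemini"` identifiers, and the 500,000-token threshold are hypothetical placeholders, not benchmarked values; map them to real model IDs in whatever tool you use.

```python
from enum import Enum


class Task(Enum):
    QUALITATIVE = "qualitative"  # transcripts, policy docs, contested claims
    EXTRACTION = "extraction"    # consistent data points across many reports
    SYNTHESIS = "synthesis"      # patterns across a very large corpus


# Hypothetical defaults reflecting the rules of thumb above.
DEFAULT_MODEL = {
    Task.QUALITATIVE: "claude",
    Task.EXTRACTION: "gpt",
    Task.SYNTHESIS: "gemini",
}

# Hypothetical cut-off: above this, route to the long-context model.
LONG_CONTEXT_THRESHOLD = 500_000


def choose_model(task: Task, corpus_tokens: int) -> str:
    """Pick a model for a task, overriding to long context for huge corpora."""
    if corpus_tokens > LONG_CONTEXT_THRESHOLD:
        return "gemini"
    return DEFAULT_MODEL[task]
```

The point isn't the specific mapping, which you'd revise as models change. It's that the choice becomes explicit and repeatable instead of whatever the tool defaulted to.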

What to look for

If you're evaluating AI research tools, model flexibility deserves a spot on your criteria list. Not as a nice-to-have. As a methodology question.

Three things worth checking:

- Can you switch models without re-uploading your sources? If changing models means starting over, you won't do it.

- Can you ask the same question to different models and compare? This is where the useful insight comes from.

- Does the tool show your sources alongside the model's analysis? Model flexibility without source grounding is just getting different flavours of hallucination.
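The first two checks boil down to one workflow: send the same question and the same sources to several models, then see what each one surfaced that the others didn't. A minimal sketch, assuming a hypothetical `ask(model, question, sources)` callable that returns a set of findings (for example, claims, or the IDs of sources the model cited). How you pull findings out of a free-text answer is left open.

```python
from typing import Callable, Dict, Iterable, List, Set

# Hypothetical signature: (model_id, question, sources) -> set of findings.
AskFn = Callable[[str, str, List[str]], Set[str]]


def fan_out(ask: AskFn, models: Iterable[str], question: str,
            sources: Iterable[str]) -> Dict[str, Set[str]]:
    """Ask every model the same question over the same sources."""
    sources = list(sources)  # one shared source set, no re-uploading per model
    return {m: set(ask(m, question, sources)) for m in models}


def unique_findings(results: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """For each model, the findings no other model produced."""
    unique = {}
    for model, found in results.items():
        others = set().union(*(f for m, f in results.items() if m != model))
        unique[model] = found - others
    return unique
```

The `unique_findings` output is the interesting part: it's exactly the "each one missed things the others caught" effect, made visible.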

The market is moving in this direction. Tools like Magai and Zine offer multi-model access. OpenRouter-based platforms, including Onsomble, let you choose your model per query. The trend is toward giving researchers the choice rather than making it for them.
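With OpenRouter, per-query model choice is just a field in the request. OpenRouter exposes an OpenAI-compatible chat completions endpoint, so the sketch below builds (but doesn't send) a request using Python's standard library. The model slugs and the `OPENROUTER_API_KEY` environment-variable name are placeholders for illustration; check OpenRouter's model list for current IDs. Nothing here reflects how Onsomble or any other specific tool is implemented.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(model: str, question: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request targeting one model."""
    body = {
        "model": model,  # the per-query choice
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY", "")
    question = "What are the key tensions between these sources?"
    # Placeholder slugs; replace with IDs from OpenRouter's model list.
    for model in ["anthropic/your-claude-model", "openai/your-gpt-model",
                  "google/your-gemini-model"]:
        req = build_request(model, question, key)
        print(model, req.get_method(), req.full_url)
        # To send it: urllib.request.urlopen(req), then json.load the response.
```

Because only the `model` field changes, asking the same question of three models is a loop rather than three separate integrations.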

One caveat: don't chase model count as a vanity metric. Having 150 model options doesn't make your research better. Having the ability to deliberately choose the right model for the right question does.

The bottom line

From where I sit, model choice isn't a technical feature. It's a research methodology decision.

When your tool locks you to one model, you're locked to one way of seeing your sources. One reasoning style. One set of blind spots. You won't know what you're missing until you look through a different lens.

The researchers doing the best AI-assisted work in 2026 won't be the ones with the "best" model. They'll be the ones who know which model to use for which question.

For a broader comparison of research workflows, see what happened when we tested 6 AI research approaches on the same project.
