
The AI Verification Triage: What to Always Check, What to Spot-Check, and What to Trust

8 min read · Feb 13, 2026

AI Strategy

Rosh Jayawardena, Data & AI Executive

92% of users don't verify AI outputs. Here's a framework for knowing when that's fine and when it'll burn you.

Fifty papers at ICLR 2026 contained hallucinated citations. These weren't obscure submissions from questionable sources. They were reviewed by three to five peer experts each. Several had average reviewer ratings of 8 out of 10. Without intervention, they would almost certainly have been published in the official proceedings of one of the world's top AI conferences.

The reviewers weren't careless. They were experts doing what experts do. The citations looked real. Real-sounding author names. Plausible paper titles. Fabricated ArXiv IDs that could pass a quick glance.

For the underlying reason models invent convincing facts in the first place, see our explainer on why AI invents facts.

You probably already knew AI could hallucinate. Everyone knows that by now. Yet according to an Exploding Topics study, 92% of users don't verify AI outputs. At the other extreme, the people who verify everything are burning time checking brainstorming lists and internal notes.

Both groups are missing something.

The problem isn't that people don't verify. It's that there's no clear framework for knowing what to verify. What I've found useful is a verification triage that prioritizes based on actual risk, not paranoia.

The Actual Error Rates

Before you can triage verification, you need to know how bad the problem actually is. Not the vague "AI sometimes makes mistakes" hand-waving, but the actual numbers.

They're worse than most people think, and better than the doom content suggests. What I've found is that it depends heavily on what you're asking and which tools you're using.

Legal AI Tools (Stanford HAI Study, 2025):

- Lexis+ AI: 17% hallucination rate

- Westlaw AI-Assisted Research: 33% hallucination rate

- General chatbots on legal queries: 58-82% hallucination rate

Academic Research (GPTZero, 2026):

- 2% of accepted NeurIPS 2025 papers contained at least one hallucinated citation

- 50+ ICLR 2026 submissions with fake citations that passed initial peer review

General AI Research:

- 60% of leading AI chatbots incorrectly cite their sources (University of Maryland)

- 45% error rate on factual queries (BBC study)

Real-World Consequences:

- 729+ documented cases of lawyers filing pleadings with fabricated AI-generated legal authorities

What do these numbers tell us? A few things.

First, specialized tools hallucinate less than general chatbots, but still hallucinate. Lexis+ AI at 17% is better than general chatbots at 58-82% on legal queries, but roughly one in six isn't a number I'd bet a client relationship on.

Second, the type of output matters quite a bit. Citations are particularly prone to hallucination. General summaries less so. Brainstorming outputs even less.

Third, peer review and human expertise don't catch these errors reliably. If five AI experts can miss a fake citation, so can I. So can you.
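To make the "one in six" figure concrete, here is a rough sketch of how a per-query error rate compounds over a research session. It assumes every query fails independently at the reported rate, which is my simplification rather than anything the Stanford study claims, and the function name is purely illustrative.

```python
def chance_of_at_least_one_error(per_query_rate: float, num_queries: int) -> float:
    """Probability that at least one of num_queries outputs contains an error.

    Assumes errors are independent across queries (a simplifying assumption).
    """
    if not 0.0 <= per_query_rate <= 1.0:
        raise ValueError("per_query_rate must be between 0 and 1")
    if num_queries < 0:
        raise ValueError("num_queries must not be negative")
    # P(at least one error) = 1 - P(no errors in any query)
    return 1.0 - (1.0 - per_query_rate) ** num_queries


# Rates quoted above: Lexis+ AI 17%, Westlaw AI-Assisted Research 33%.
for tool, rate in [("Lexis+ AI", 0.17), ("Westlaw AI-Assisted Research", 0.33)]:
    for n in (1, 5, 10):
        p = chance_of_at_least_one_error(rate, n)
        print(f"{tool}: {n} queries -> {p:.0%} chance of at least one hallucination")
```

Under that independence assumption, five queries at a 17% rate already carry roughly a 61% chance of at least one hallucinated answer. The per-query number understates the risk of a full working session.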

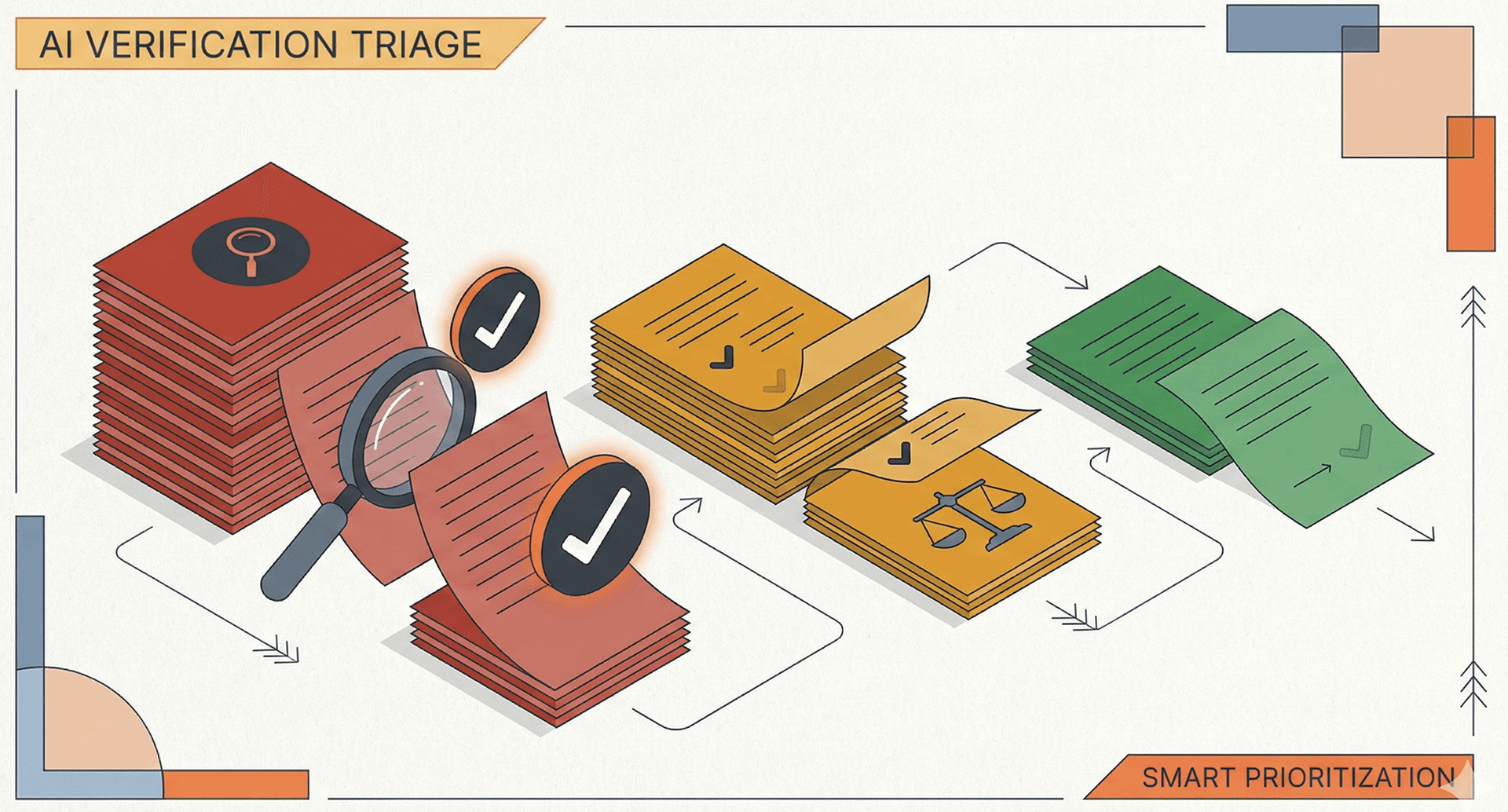

The Verification Triage Framework

Not all AI outputs carry equal risk. A hallucinated citation in a client deliverable is a professional disaster. A slightly off brainstorming idea is just noise to filter. Treating them the same wastes your time and attention.

Here's the framework I've settled on:

RED - Always Verify:

- Specific citations or source references

- Statistics and numerical claims

- Named individuals, companies, or organizations

- Anything going to clients, executives, or the public

- Legal, medical, or financial claims

- Direct quotes attributed to real people

YELLOW - Spot-Check:

- General claims about how things work

- Reasoning chains and explanations

- Summaries of material you already know

- Historical context or background information

- Comparisons between options

GREEN - Lower Risk:

- Brainstorming and ideation outputs

- First drafts and rough outlines

- Internal notes and working documents

- Format and structure suggestions

- Rewording or editing assistance

The logic behind this triage is fairly simple: verify where the cost of being wrong is high.

A fake citation in a client report damages your credibility and could expose you to professional liability. That's a red zone item. Always verify.

A slightly imprecise explanation of how machine learning works in an internal note? The cost of being wrong is low. That's green zone. Save your verification time for what matters.

A quick decision tree:

- Will this be seen by clients or the public? If yes → RED

- Does it claim something specific and verifiable? If yes → RED

- Is it summarizing or explaining something general? → YELLOW

- Is it just helping me think or draft? → GREEN
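If it helps to see the decision tree as logic, here is a minimal sketch. The class and field names are my own illustrative choices, one boolean per question, and the last question ("just helping me think or draft") is treated as the fall-through case.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    RED = "always verify"
    YELLOW = "spot-check"
    GREEN = "lower risk"


@dataclass
class Output:
    # Illustrative field names; each maps to one question in the decision tree.
    seen_by_clients_or_public: bool = False
    specific_verifiable_claim: bool = False
    general_summary_or_explanation: bool = False


def triage(output: Output) -> Zone:
    """Walk the decision tree top to bottom; the first 'yes' decides the zone."""
    if output.seen_by_clients_or_public:
        return Zone.RED
    if output.specific_verifiable_claim:
        return Zone.RED
    if output.general_summary_or_explanation:
        return Zone.YELLOW
    # Nothing above applied: the output is just helping you think or draft.
    return Zone.GREEN


client_stat = Output(seen_by_clients_or_public=True, specific_verifiable_claim=True)
internal_brainstorm = Output()
print(triage(client_stat).name, triage(internal_brainstorm).name)
```

The order of the checks matters: audience and specificity are tested first, so a summary that is headed to a client still lands in RED rather than YELLOW.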

How to Verify Without Destroying Productivity

Knowing what to verify is half the challenge. The other half is doing it efficiently. Verification doesn't mean re-doing all the research yourself.

1. The Citation Existence Check (30 seconds)

Before using any citation, do a quick Google Scholar or database search for the exact title. If nothing turns up, treat the citation as fabricated until you can locate it some other way. This takes 30 seconds and catches the most damaging errors.

GPTZero found that hallucinated citations ranged from obvious (author names like "John Doe and Jane Smith") to sophisticated (real authors paired with fake paper titles). The existence check catches both.
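If you are checking a long reference list, the comparison step of the existence check can be sketched in code. This is only a sketch under assumptions: it does not query Google Scholar or any database, so you still run the search yourself and paste in the titles that came back. The function names and the 0.9 similarity threshold are arbitrary choices of mine, not an established standard.

```python
import re
from difflib import SequenceMatcher
from typing import List, Optional, Tuple


def normalize_title(title: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivial differences don't matter."""
    cleaned = re.sub(r"[^a-z0-9\s]", " ", title.lower())
    return " ".join(cleaned.split())


def best_title_match(cited_title: str, search_results: List[str]) -> Tuple[Optional[str], float]:
    """Return the closest title from search_results and its similarity score (0.0 to 1.0)."""
    target = normalize_title(cited_title)
    best, best_score = None, 0.0
    for candidate in search_results:
        score = SequenceMatcher(None, target, normalize_title(candidate)).ratio()
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


def citation_probably_exists(cited_title: str, search_results: List[str],
                             threshold: float = 0.9) -> bool:
    """True if some search result matches the cited title closely enough.

    The threshold is a hypothetical default; tune it for your own material.
    """
    _, score = best_title_match(cited_title, search_results)
    return score >= threshold


# Placeholder titles, invented purely for illustration.
results = ["A Study of Example Things.", "Unrelated Work on Other Topics"]
print(citation_probably_exists("A study of example things", results))
print(citation_probably_exists("Deep Verification of Imaginary Results", results))
```

A title match only confirms that a paper with that name exists. You still need to confirm that the authors and the claim attributed to it line up.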

2. Lateral Reading (2-3 minutes)

For factual claims, open two or three tabs and search for the same claim. If you can't find it independently stated anywhere credible, be suspicious. This technique comes from fact-checking journalism, and it works for AI outputs too.

Don't just search for confirmation. Search for the claim itself and see who else is making it.

3. Source Triangulation (1-2 minutes)

When AI cites a statistic, ask: where did this number originally come from? Trace it back. If the AI says "according to a 2024 McKinsey study" and you can't find that study, the number is suspect even if it sounds plausible.

This is where a lot of AI errors hide. The statistic might exist, but the attribution might be wrong. Or the number might be from a different year, a different sample, or a different context entirely.
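One way to keep yourself honest during triangulation is to write down what the AI claimed next to what you actually traced, then compare field by field. The sketch below assumes a simple record with four fields (value, source, year, context); those field names and the sample values are hypothetical placeholders, not real statistics.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class Attribution:
    value: str    # the number as stated, e.g. "40%"
    source: str   # who published it
    year: int     # when it was published
    context: str  # the sample or population it describes


def triangulate(claimed: Attribution, traced: Optional[Attribution]) -> List[str]:
    """List the problems found when comparing the AI's attribution with the traced original."""
    if traced is None:
        return ["original source not found"]
    return [
        f"{f.name}: AI said {getattr(claimed, f.name)!r}, source says {getattr(traced, f.name)!r}"
        for f in fields(claimed)
        if getattr(claimed, f.name) != getattr(traced, f.name)
    ]


# Invented example values: the number exists, but the year is wrong.
claimed = Attribution("40%", "Example Institute", 2024, "US adults")
traced = Attribution("40%", "Example Institute", 2022, "US adults")
for problem in triangulate(claimed, traced):
    print(problem)
```

An empty list means the attribution held up on every field you recorded. Anything else tells you exactly which part of the claim to fix or drop.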

4. Confidence Calibration

Pay attention to how the AI phrases things. Hedged statements ("some research suggests") often warrant less scrutiny than confident assertions ("the study found that 73% of users...").

Ironically, confident AI statements are the most dangerous. They're the ones most likely to be made up, and the ones you're most likely to believe.
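As a rough illustration of what to listen for, here is a crude keyword heuristic that flags sentences asserting specifics with no hedging. The word lists are my own guesses and far from complete, so treat it as a reading aid rather than a detector.

```python
import re

# Hypothetical, deliberately small word lists; extend them for your own material.
HEDGES = re.compile(
    r"\b(some|may|might|could|suggests?|possibly|roughly|approximately|around)\b",
    re.IGNORECASE,
)
SPECIFICS = re.compile(
    r"\d+(\.\d+)?\s*%|\baccording to\b|\bthe study found\b|\b(19|20)\d{2}\b",
    re.IGNORECASE,
)


def needs_scrutiny(sentence: str) -> bool:
    """Flag sentences that state specifics (figures, years, attributions) without any hedge."""
    return bool(SPECIFICS.search(sentence)) and not HEDGES.search(sentence)


for sentence in [
    "The study found that 73% of users prefer this approach.",
    "Some research suggests users prefer this approach.",
]:
    print(needs_scrutiny(sentence), "-", sentence)
```

This does not override the triage: a statistic heading to a client is still red zone even when the AI hedges it.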

The Uncomfortable Truth About "Verify Everything"

The standard advice to "verify everything" is well-intentioned but doesn't really work in practice.

It doesn't scale. If you're using AI to save time on research, and then spending that time verifying every output, you haven't saved anything. The 92% who don't verify aren't lazy or careless. They've implicitly recognized that verifying everything defeats the purpose of using AI in the first place.

But the solution isn't to verify nothing. It's to verify the right things.

The junior analyst who spends 30 minutes verifying a brainstorming list is wasting time on green zone outputs. The same analyst who sends unverified statistics to a client is courting disaster in the red zone.

The goal isn't verification theater. It's verification triage.

In practice: before you act on any AI output, take three seconds to mentally categorize it. Red, yellow, or green. Then let that categorization determine your next action.

Red zone output before a client meeting? Stop and verify. Green zone draft of internal talking points? Crack on.

Working With Imperfect Tools

AI research tools are imperfect. The Stanford study found Westlaw hallucinating on one in three queries. GPTZero found fake citations slipping past expert peer reviewers. The BBC found a 45% error rate on factual queries.

These aren't numbers that inspire blind trust.

But they're also not numbers that justify abandoning these tools entirely. The people who do well with AI probably won't be the ones who trust everything or verify everything. They'll be the ones who develop verification intuition: the instinct for knowing what needs checking and what doesn't.

That intuition starts with a framework.

The next time you use AI for research, try this: before acting on any output, explicitly categorize it. Say it out loud if you need to. "This is a red zone citation. I need to verify it exists." Or "This is green zone brainstorming. I'll use it as a starting point."

The 92% who don't verify aren't wrong. They're just missing a framework to know when it matters.

Now you have one.
