Evaluation & Safety

Hallucination

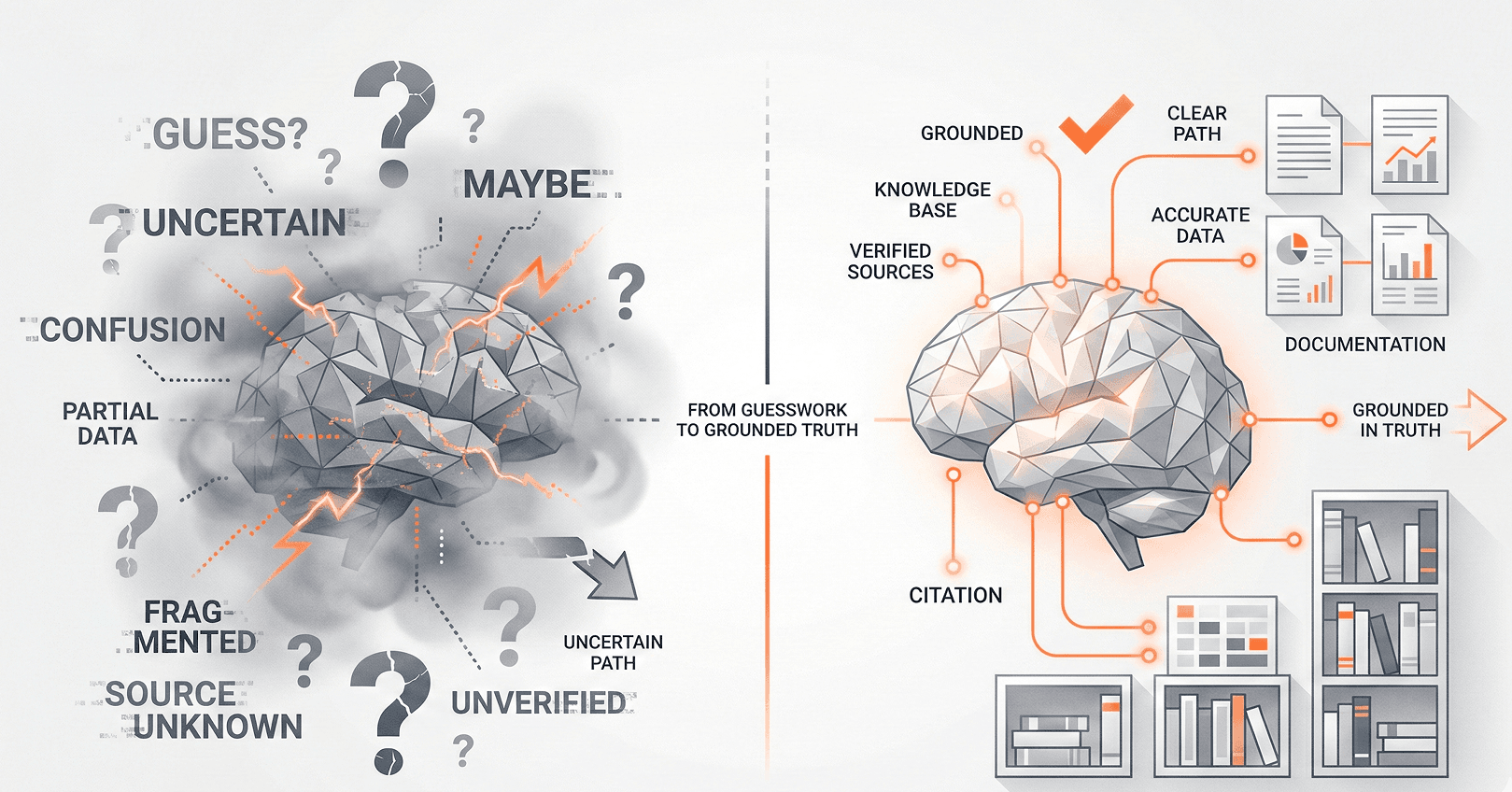

When an AI model generates information that sounds plausible but is factually incorrect, fabricated, or unsupported by its training data or provided context.

Why it matters

Hallucination is the primary barrier to deploying AI in high-stakes domains like healthcare, legal, and finance. Understanding and mitigating it is essential for production AI.

Why models hallucinate

LLMs are fundamentally next-token predictors. They generate text that is statistically likely, not necessarily true. When the model lacks sufficient knowledge or context, it fills gaps with plausible-sounding fabrications rather than admitting uncertainty.

Types of hallucination

- Factual fabrication — inventing facts, citations, or statistics that don't exist.

- Context contradiction — generating output that contradicts the provided context or documents.

- Instruction drift — gradually departing from the user's instructions over long outputs.

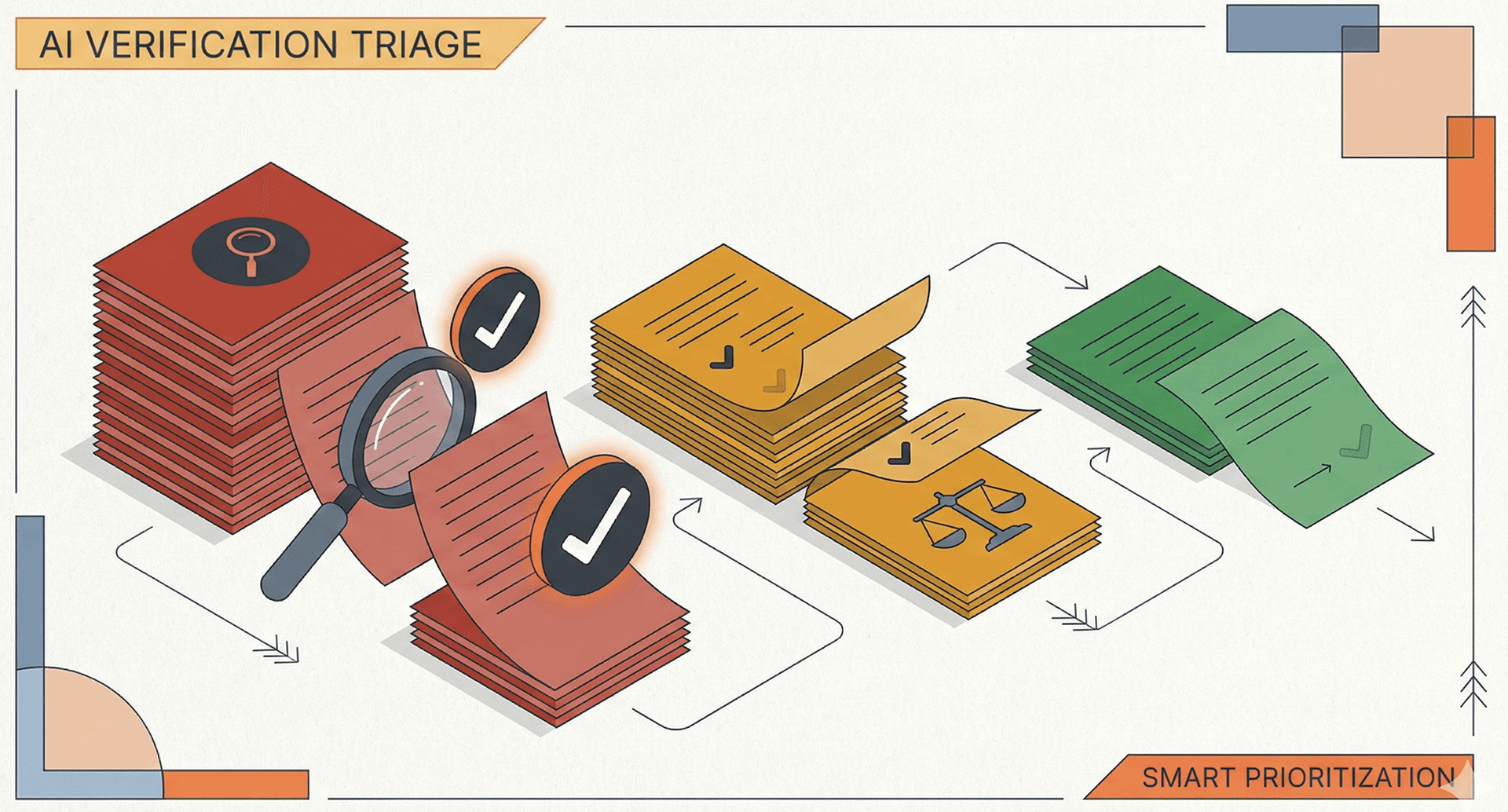

Mitigation strategies

- RAG — ground responses in retrieved documents.

- Citations — require the model to cite specific sources for claims.

- Confidence calibration — train models to express uncertainty.

- Guardrails — post-generation fact-checking and consistency validation.

Related terms

Retrieval-Augmented Generation(RAG)A technique that grounds a language model's output in external data by retrieving relevant documents before generating a response.Large Language Model(LLM)A neural network trained on massive text corpora that can understand and generate human language, typically with billions of parameters.GuardrailsProgrammatic constraints placed around AI model inputs and outputs to prevent harmful, off-topic, or policy-violating behavior.