Generative AI

Artificial intelligence that creates new content — text, images, video, audio, or code — by learning patterns from existing data and producing original outputs in response to prompts.

Why it matters

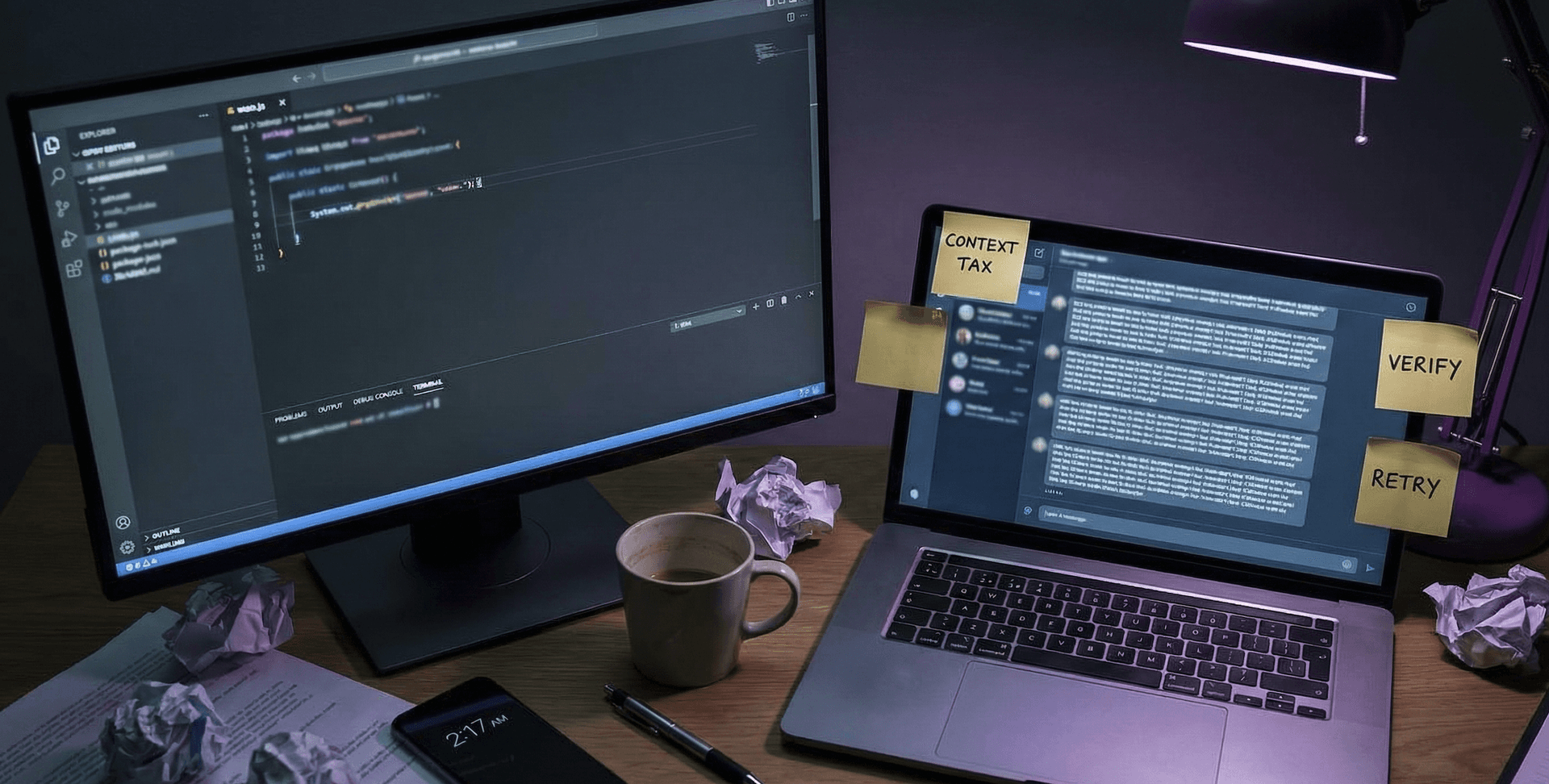

Generative AI is reshaping how teams create content, write code, and analyse information. Understanding its capabilities and limitations is essential for using it effectively — and for avoiding the costly mistakes that come from treating it as a fact-checking tool or autonomous decision-maker.

How it works

Generative AI models use neural networks to learn statistical patterns from massive training datasets. During inference, they generate new content by sampling from those learned patterns. A language model, for example, repeatedly predicts a probability distribution over the next token (a word or word fragment) and picks from it, while image and audio models build their outputs in other ways. The result is usually a novel combination drawn from learned distributions rather than a copy of the training data, although models can sometimes reproduce memorised passages.
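As a rough illustration of learning patterns and then sampling from them, here is a minimal sketch of a word-level bigram model. It is a toy, not how production systems are built: real language models use neural networks over tokens, and the function names `train_bigram` and `generate` and the tiny corpus are made up for this example.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each word follows each other word in the text."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts


def generate(counts, start, length, rng):
    """Build a sequence one word at a time by sampling from the learned counts."""
    output = [start]
    for _ in range(length - 1):
        followers = counts.get(output[-1])
        if not followers:
            break  # the last word was never followed by anything in training
        words = list(followers)
        weights = [followers[w] for w in words]
        # Sample in proportion to observed frequency instead of always
        # taking the single most common follower.
        output.append(rng.choices(words, weights=weights, k=1)[0])
    return output


# Hypothetical toy corpus; a real model trains on vastly more data.
corpus = "the cat sat on the mat the dog sat on the rug"
model = train_bigram(corpus)
sample = generate(model, "the", 6, random.Random(0))
print(" ".join(sample))
```

Every adjacent word pair in the output was seen during training, yet the full sentence may never have appeared in the corpus. That is the sense in which the output is a new combination of learned patterns.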

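The two dominant architectures are transformers (for text, code, and increasingly other modalities) and diffusion models (for images and video). Both build their outputs step by step: transformer-based language models extend an incomplete sequence one token at a time, while diffusion models start from random noise and refine it into a coherent image over many denoising steps.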
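The idea of refining noise into an output can be sketched with a toy loop. Nothing here is a real diffusion model: `toy_denoiser` is a hypothetical stand-in that nudges values toward a fixed `TARGET`, whereas a trained model uses a neural network to estimate what noise to remove at each step and has no fixed target to aim for.

```python
import random

# Stand-in for "a coherent output"; a real model has no fixed target.
TARGET = [0.0, 0.5, 1.0, 0.5, 0.0]


def toy_denoiser(noisy, strength=0.3):
    """Hypothetical denoiser: move each value part of the way toward TARGET."""
    return [x + strength * (t - x) for x, t in zip(noisy, TARGET)]


def refine(steps, rng):
    """Start from pure random noise and refine it over several steps."""
    sample = [rng.gauss(0.0, 1.0) for _ in TARGET]
    errors = []
    for _ in range(steps):
        sample = toy_denoiser(sample)
        # Track the largest remaining distance from the target after each step.
        errors.append(max(abs(x - t) for x, t in zip(sample, TARGET)))
    return sample, errors


final, errors = refine(steps=20, rng=random.Random(0))
print([round(x, 2) for x in final])
```

Each pass removes a fraction of the remaining error, so the sample moves from noise toward structure gradually rather than in a single jump.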

Key modalities

- Text generation — Large language models like GPT, Claude, and Gemini produce written content, answer questions, and write code.

- Image generation — Diffusion models like Stable Diffusion, DALL-E, and Midjourney create images from text descriptions.

- Audio and video — Emerging tools generate music, voice, and video from text prompts, though quality and control are still maturing.

- Code generation — Specialised models autocomplete, translate, and generate code across programming languages.

What it doesn't do

Generative AI doesn't verify facts, reason about truth, or understand the world the way humans do. It produces statistically plausible outputs, which means it can confidently generate incorrect information (hallucinations). It also can't reliably assess data quality or replace domain expertise — it's a powerful complement to human judgment, not a substitute for it.
